If you run a small or mid-sized WordPress blog, you don’t need a PhD in statistics or a unicorn-sized budget to squeeze more clicks, time on page, and organic traction out of your content. I’ve watched tiny, well-structured experiments (headline swaps, a button color change, a clearer subhead) add up to meaningful gains across weeks. Think of A/B testing like a slow-cooked sauce: a little heat, consistent stirring, and patience turn modest ingredients into something deliciously effective.

In this guide I’ll walk you through a practical, repeatable WordPress A/B testing workflow: defining goals and hypotheses, choosing low-hanging experiments, wiring the right tools into your site, launching quick wins you can set up in an hour, interpreting results like a grown-up, turning winners into SEO-friendly updates, avoiding common mistakes, and shipping a two-week sprint template you can copy. No lab coats required, just curiosity, a clean hypothesis, and a willingness to iterate.

Define goals and hypotheses for WordPress A/B testing

Everything useful starts with a clear destination. Before you tinker with headlines or CTA colors, translate your big-picture aim into a SMART goal: Specific, Measurable, Achievable, Relevant, Time-bound. I once had a team say “we want more engagement.” Great—now make it real: “Increase average time on page for our how-to posts by 20% within six weeks.” That gives you an actual yardstick.

From there, write falsifiable, single-variable hypotheses. A good hypothesis states what you’ll change, the expected direction and size of the effect, and how you’ll measure it. Example: “If we change the H2 to a benefits-oriented subhead, then internal link CTR from that section will rise by 10% within 10 days.” If you mix five changes into one test, you’ve basically cooked up a mystery casserole—delicious, but you won’t know which spice did the trick.

Also document assumptions and risks. Are you assuming search traffic is stable? That mobile users will behave like desktop users? Note seasonality (holidays), referral campaigns, and any analytics quirks. Lastly, rank tests by expected impact and effort: a headline tweak that could bump CTR by 8% is often a far better first experiment than a full layout redesign that costs a week of engineering time.

Choose experiments that move the needle (low-hanging fruit)

Start where your visitors actually look and click. The biggest returns usually come from visible, decision-heavy elements: headlines, meta descriptions, featured images, hero CTAs, and signup forms. These are the parts of the page that shout at readers—ignore them at your peril. I treat these as my “frontline” experiments because they’re cheap, fast, and meaningful.

Keep tests focused: one variable per experiment. For headlines, try 2–3 variants. For CTAs, swap copy (“Get the guide” vs “Download now”), color, or placement—but only one change at a time unless you intentionally plan a multivariate test. If traffic is modest, group similar pages (e.g., top 5 how-to posts) and run the same headline test across the group to reach sample size faster. If traffic’s heavy, you can run multiple experiments in parallel—just be careful of interactions.

Make a backlog with one-sentence hypotheses, estimated lift, and effort. Rank by impact/effort and check feasibility: what’s the baseline conversion, and how long will a test take to reach significance? Aim for realistic lift targets (5–15% for many small tweaks). That way you spend time on experiments that actually matter, not on digital feng shui.
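To make the impact/effort ranking concrete, here is a minimal Python sketch. The `Idea` class, the example hypotheses, and the lift and effort numbers are all hypothetical placeholders, and dividing expected lift by effort is just one simple scoring choice, not the only sensible one.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    hypothesis: str
    expected_lift_pct: float  # estimated relative lift, e.g. 8.0 means 8%
    effort_days: float        # rough effort to build and launch the test

    @property
    def score(self) -> float:
        # Simple impact/effort ratio: higher means "run this sooner".
        return self.expected_lift_pct / self.effort_days


# Hypothetical backlog entries; replace with your own estimates.
backlog = [
    Idea("Benefit-led headline on top how-to post", 8.0, 0.5),
    Idea("Full layout redesign", 15.0, 5.0),
    Idea("CTA copy: 'Get the guide' vs 'Download now'", 5.0, 0.25),
]

ranked = sorted(backlog, key=lambda idea: idea.score, reverse=True)
for idea in ranked:
    print(f"{idea.score:5.1f}  {idea.hypothesis}")
```

With these made-up numbers the cheap copy and headline tests outrank the redesign, even though the redesign has the largest estimated lift.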

Set up testing infrastructure on WordPress

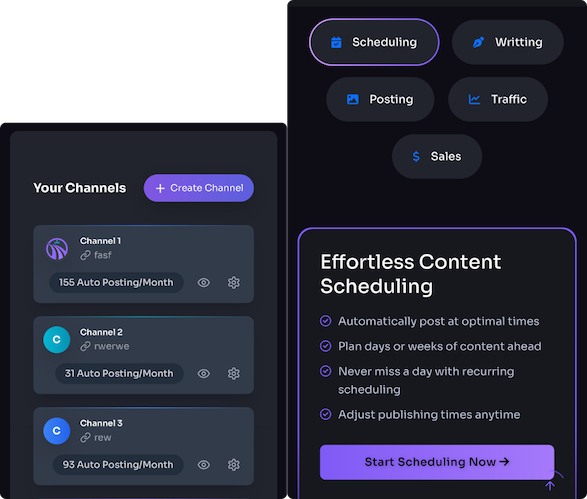

Pick tools that match the scope. For small in-dashboard experiments, WordPress plugins like Nelio A/B Testing or Thrive Optimize let you create variants, split traffic, and read basic reports without hacking your theme. For cross-domain tracking, heavier attribution, or advanced multivariate tests, look to external platforms such as Optimizely or VWO. If you use GA4, you can also wire experiments into your analytics stack for unified reporting—just be careful to set up consistent event tracking.

Decide scope and tracking up front. Before you flip the switch, define pages, variants, traffic split (50/50 is common), the primary metric (CTR, time on page, signups), audience segmentation, test duration, and the minimum detectable effect (the smallest lift you want the test to be able to detect). A winner isn’t just a numerical winner; it must pass your pre-declared decision rules. I’ve seen teams cheer an apparent win on day two only to watch it evaporate by week two, once enough mobile traffic had arrived to even out a lopsided early sample. Don’t be that team.
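If you want a rough number for "how much traffic is enough," the sketch below uses the standard normal-approximation formula for comparing two conversion rates. The function name is mine, and the defaults (5% significance, 80% power) are conventional assumptions rather than anything your plugin necessarily uses, so treat the output as a planning estimate.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect a relative lift.

    baseline_rate: current conversion rate, e.g. 0.04 for 4%
    relative_lift: minimum detectable effect, e.g. 0.10 for +10%
    alpha, power: assumed conventional defaults (two-sided test)
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Example: 4% baseline CTR, hoping to detect a 10% relative lift.
needed = sample_size_per_variant(0.04, 0.10)
print(f"Visitors needed per variant: {needed}")
```

Small lifts on low baseline rates need tens of thousands of visitors per variant, which is exactly why pooling similar pages or targeting bigger effects matters on a modest blog.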

For a tidy workflow: integrate the tester with your WordPress admin, hook test events into GA4 or your analytics, and set up UTM tagging for variants. Tools like Nelio automate many steps, and services such as Trafficontent can generate copy variants and social previews to speed experiments. Remember: the easier the process, the more likely you’ll keep testing.
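Plugins handle traffic splitting for you, but it helps to see what is going on underneath. This is a minimal sketch of deterministic assignment plus variant tagging; the function names, the choice of `utm_campaign` and `utm_content` as the parameters, and the `example.com` URL are assumptions for illustration, not how Nelio or any other tool actually does it.

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def assign_variant(visitor_id: str, experiment: str, split: float = 0.5) -> str:
    """Map a visitor to the same arm every time by hashing their ID."""
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return "control" if bucket < split else "variant"


def tag_url(url: str, experiment: str, variant: str) -> str:
    """Append UTM parameters so analytics can tell the arms apart."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update({"utm_campaign": experiment, "utm_content": variant})
    return urlunsplit(parts._replace(query=urlencode(query)))


arm = assign_variant("visitor-123", "headline-test")
print(arm, tag_url("https://example.com/post?x=1", "headline-test", arm))
```

Hashing the experiment name together with the visitor ID means a returning visitor keeps seeing the same version, and different experiments get independent splits.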

Launch quick-win tests you can deploy in an hour

Want momentum? Run an experiment you can set up in about an hour and that teaches you something concrete. Pick one visible element, build a control and one variant, split traffic evenly, and let the data accumulate. The setup is the hour-sized part; the results still need days to come in. I do this when I want an early sense of whether an idea has legs; it’s like tossing a pebble into a pond to see if the ripples reach the shore.

Example quick tests you can deploy in an hour:

- Two headline variants on a high-impression post (measured by CTR and time on page).

- Swap your hero image to a more topical or human photo to test visual engagement and scroll depth.

- Change CTA color (blue → orange) keeping copy identical to measure click-throughs.

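- Swap meta description copy to test SERP CTR. Search engines may rewrite your snippet, and search results can’t be split 50/50, so treat this as a before/after comparison: change the title or description, then compare CTR for the same page and queries in Search Console’s Performance report over equal time windows.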

Keep the variant simple: one exact change. Split traffic 50/50 and run for 3–7 days or until you reach a minimum sample size. Verify tracking events fire on both versions and tag visits so your analytics can tell variants apart. If you see a lift, celebrate—but only after checking secondary metrics. If you don’t, you’ve still learned something cheap and fast. Either way, don’t waste an hour if you haven’t already mapped the metric and threshold you’ll use to judge success.
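A quick back-of-the-envelope check tells you whether "3–7 days" is realistic for your traffic. This sketch assumes traffic is split evenly across arms and stays roughly flat day to day; the function name and the 3-day floor are my own illustrative choices.

```python
import math


def days_to_reach_sample(required_per_variant: int, daily_visitors: int,
                         variants: int = 2, min_days: int = 3) -> int:
    """Estimate test length in days, never shorter than min_days."""
    per_variant_per_day = daily_visitors / variants
    days_for_sample = math.ceil(required_per_variant / per_variant_per_day)
    return max(days_for_sample, min_days)


# Example: 2,000 visitors needed per arm, 600 visitors a day on the page.
print(days_to_reach_sample(2000, 600))  # 7
```

If the answer comes back as several weeks, that is your cue to pool similar pages or pick a test with a bigger expected effect.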

Analyze results like a pro

Analysis is where tests stop being experiments and start being lessons. Your primary job is to decide whether the signal is real, practically meaningful, and repeatable. Begin with statistical significance—commonly a p-value threshold around 0.05—but don’t worship p-values. Focus on practical uplift. An improvement of 0.5% might be statistically significant on huge traffic, but is it worth the rollout headache?
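Most testing tools report significance for you, but here is a minimal sketch of what sits behind that number: a two-sided, two-proportion z-test using only the standard library. The function name and the visitor counts are made up for illustration, and the normal approximation assumes reasonably large samples.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (relative lift of B over A, two-sided p-value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return (p_b - p_a) / p_a, p_value


# Hypothetical counts: control 200/5000 clicks, variant 250/5000 clicks.
lift, p_value = two_proportion_z_test(200, 5000, 250, 5000)
print(f"relative lift: {lift:.1%}, p-value: {p_value:.4f}")
```

Read the two outputs together: the p-value says whether the difference is likely real, and the lift says whether it is big enough to bother shipping.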

Always check secondary metrics and funnel context. A headline that raises CTR but doubles bounce rate is a false friend. Look at time on page, scroll depth, referral sources, and conversion events. Segment results by device and traffic source—mobile behavior often differs sharply from desktop. Also check for confounders: did a campaign or a spike in social shares during the test tilt results? If a pattern only shows on one weekday or from one referrer, dig deeper before calling a winner.
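Here is a small sketch of the segment check, assuming you can export per-segment totals from your analytics as simple rows. The row layout, the function name, and the numbers are hypothetical; the point is only to show how an overall result can hide opposite effects on desktop and mobile.

```python
from collections import defaultdict


def lift_by_segment(rows):
    """rows: iterable of (segment, arm, visitors, conversions) tuples.

    Returns the relative lift of 'variant' over 'control' per segment.
    """
    totals = defaultdict(lambda: {"control": [0, 0], "variant": [0, 0]})
    for segment, arm, visitors, conversions in rows:
        totals[segment][arm][0] += visitors
        totals[segment][arm][1] += conversions

    report = {}
    for segment, arms in totals.items():
        control_rate = arms["control"][1] / arms["control"][0]
        variant_rate = arms["variant"][1] / arms["variant"][0]
        report[segment] = (variant_rate - control_rate) / control_rate
    return report


# Made-up export: the variant helps on desktop but hurts on mobile.
rows = [
    ("desktop", "control", 1000, 50),
    ("desktop", "variant", 1000, 60),
    ("mobile", "control", 2000, 80),
    ("mobile", "variant", 2000, 72),
]
report = lift_by_segment(rows)
for segment, lift in report.items():
    print(f"{segment}: {lift:+.0%}")
```

Segments slice your sample thinner, so a per-segment difference needs its own significance check before you act on it.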

Document everything: hypothesis, sample size, confidence intervals, segments, and a judgment (win, lose, inconclusive). I keep a simple results log where each entry records the decision rule used and the actionable outcome. If a variant wins, roll it out and monitor for a few weeks—some lifts stick, others regress. If it loses, treat it like free information: you just eliminated a bad idea without breaking the site.

Turn results into SEO gains

Short version: when users click more and stay longer, the page earns more from the rankings it already has, and search engines may take notice. That’s not sorcery, and it’s not guaranteed either: how much weight search engines give engagement signals isn’t public, but a higher SERP CTR means more visits from the same position regardless. When a page shows improved CTR and dwell time, it becomes easier to justify boosting it in your content calendar or refining its metadata for better SERP performance.

Operational steps to convert winners into SEO wins:

- Update title tags and meta descriptions on winning pages so SERP snippets reflect the tested variant. If a new headline won, craft the page title to carry that benefit and the primary keyword.

- Adjust on-page elements—H1/H2s, image alt text, and schema—so search engines see the same user-facing signals you tested.

- Use internal linking to channel authority to the improved page; anchor text should reflect the tested angle.

- Track rankings and impressions in Search Console after rollout. Patience is required—rank changes often lag engagement improvements by weeks.

Finally, scale insights to similar pages: if a CTA wording or headline structure worked on one high-impression post, test or roll it across other pages in that topical cluster. That compound effect—small wins multiplied across many pages—is where a modest testing program turns into a meaningful SEO advantage.

Common pitfalls to dodge and how to fix them

Testing is a minefield of tempting shortcuts. Here are the potholes I trip over when I’m not paying attention—so you don’t have to.

- Too many variables at once: If you change copy, image, and layout in one test, congratulations, you ran a great exploratory study and reached zero useful conclusions. Fix: isolate one variable per test.

- Insufficient traffic or too-short duration: Short windows produce noisy data. Fix: calculate sample size ahead of time; aggregate similar pages if traffic is light; run the test longer.

- Seasonality and campaign contamination: A holiday sale or a viral post can skew results. Fix: avoid launching major tests during known spikes and note external events in your test log.

- Cherry-picking early winners: Day-two “wins” often evaporate. Fix: set a minimum test duration and sample before declaring winners.

- No post-rollout monitoring: A rolled-out change can behave differently at scale. Fix: monitor metrics for 2–4 weeks after rollout and be ready to revert if adverse effects appear.

Think of your testing process as a kitchen: don’t throw every spice into the pot and hope for a masterpiece. Slow, staged tweaks, documented assumptions, and clear stop rules keep your data honest and your team sane.

Create a repeatable WordPress A/B testing plan (template)

Repeatability is the secret sauce. Once you’ve proven a couple of ideas, formalize a simple sprint template so anyone on the team can run a sensible test without re-inventing the wheel. Here’s a starter two-week sprint that I use with small teams.

Sprint plan (2 weeks):

- Week 0 — Backlog & prioritization (1 day): Pull 6–8 hypotheses into a shared doc. Rank by impact/effort. Choose 2–3 quick tests for the sprint.

- Week 1 — Setup & launch (2–3 days): Implement variants using your WordPress plugin or external tool. Set up tracking, UTMs, and segments. Start tests with a 50/50 split unless you need a 70/30 ramp.

- Week 1–2 — Run & monitor (7–10 days): Verify events daily, but don’t jump to conclusions. Document anomalies (campaigns, outages).

- End of Week 2 — Analyze & decide (1–2 days): Apply your pre-declared decision rules: win (roll out), lose (document and close), or inconclusive (extend/scale).
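Pre-declared decision rules are easier to honor when they are written down as code before the test starts. The sketch below is one possible rule set: the thresholds (5% significance, 5% minimum lift, 7-day minimum) and the function name are assumptions you should replace with whatever you committed to in your plan.

```python
def decide(p_value: float, relative_lift: float, sample_reached: bool,
           days_run: int, alpha: float = 0.05, min_lift: float = 0.05,
           min_days: int = 7) -> str:
    """Return 'win', 'lose', or 'inconclusive' under assumed thresholds."""
    # Never call a result before the planned sample and duration are met.
    if not sample_reached or days_run < min_days:
        return "inconclusive"
    significant = p_value < alpha
    if significant and relative_lift >= min_lift:
        return "win"
    if significant and relative_lift < 0:
        return "lose"
    return "inconclusive"


print(decide(p_value=0.016, relative_lift=0.25, sample_reached=True, days_run=10))
print(decide(p_value=0.016, relative_lift=0.25, sample_reached=True, days_run=2))
```

The second call returns "inconclusive" even though the numbers look great, which is the whole point: a day-two win doesn't count.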

Quick-start checklist:

- Goal & primary metric defined (e.g., CTR up 8% in 14 days).

- Single-variable hypothesis written with expected lift.

- Testing tool integrated and events mapped to GA4 / analytics.

- Split and duration set; minimum sample size calculated.

- Results logged and decision recorded.

Use a simple results log with columns: page, hypothesis, control, variant, sample size, primary metric lift, p-value/confidence, decision, and next steps. If a winner emerges, ship it across sibling pages and add follow-up tests to the backlog. If not, file the learning and move on—testing is triage, not therapy.
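A spreadsheet works fine for this, but if you prefer a file you can version, here is a minimal sketch of the same log as CSV. The column names mirror the list above; the function name and the sample row are hypothetical, and the example writes to an in-memory buffer so you can swap in a real file handle later.

```python
import csv
import io

COLUMNS = ["page", "hypothesis", "control", "variant", "sample_size",
           "primary_metric_lift", "p_value", "decision", "next_steps"]


def append_result(stream, row: dict, write_header: bool = False) -> None:
    """Write one test result as a CSV row, optionally with a header."""
    writer = csv.DictWriter(stream, fieldnames=COLUMNS)
    if write_header:
        writer.writeheader()
    writer.writerow(row)


# Made-up entry for illustration only.
log = io.StringIO()
append_result(log, {
    "page": "/how-to-example",
    "hypothesis": "Benefit-led headline raises CTR",
    "control": "Original headline",
    "variant": "Benefit-led headline",
    "sample_size": 10000,
    "primary_metric_lift": 0.25,
    "p_value": 0.016,
    "decision": "win",
    "next_steps": "Roll out to sibling posts",
}, write_header=True)
print(log.getvalue())
```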

Next steps: get started with a tiny experiment today

If you haven’t run a test yet, here’s a tiny, actionable next step: pick a top-impression post, write one clear alternative headline that promises a benefit, and run a 50/50 headline test for 7–10 days. Track headline CTR, time on page, and scroll depth during the test, and compare SERP CTR before and after rollout, since search results can’t be split. If the winning headline lifts CTR and keeps readers scrolling, make it the permanent title, update the meta description, and roll the headline formula into two more posts. That’s how compound gains begin: one small, evidence-backed change at a time.

Resources I use and recommend:

- Nelio A/B Testing (WordPress plugin) — great for in-dashboard experiments.

- Optimizely — robust platform for cross-domain and advanced tests.

- GA4: A/B testing and experiments — for analytics-aligned experimentation.

Now go pick a headline, change one thing, and learn something. You’ll probably be surprised how much a tiny tweak can do—like discovering your coffee tastes better if you actually remember to put the lid on the mug. If you want, tell me the page and the two headlines you’re considering; I’ll give a quick gut-check on which variant I’d bet on (and why).