Shopify stores live or die on product pages: they need to be discoverable, indexable, and fast. This playbook walks through the concrete, Shopify-specific changes you should make to ensure search engines find, render, and rank your product pages, and that shoppers convert once they arrive. I’ll show what to edit in themes, which checks to run in Google Search Console, how to tune Core Web Vitals, how to serve valid product schema, and how to automate ongoing monitoring so problems don’t sneak up on you.

This guide is written for store owners and technical SEOs who want repeatable, low-friction workflows. Where applicable I’ll point to practical theme edits and give examples of automation using Trafficontent to publish SEO-aligned content and keep internal linking healthy.

Crawlability foundations for Shopify product pages

Shopify’s URL patterns and navigation determine how bots discover product pages. Standard routes are predictable: collections appear under /collections/collection-handle and products at /products/product-handle (plus parameterized variants like ?variant=123). Bots start at your homepage, follow top navigation and featured collections, and then reach individual products. If a product is not reachable via at least one collection or menu link it becomes an orphan — discoverability drops and indexing will be unreliable.

Practical theme edits that improve crawl paths:

- Ensure collection templates output clean anchor links to product canonical URLs: `/products/{{ product.handle }}`. If your product tiles use JS to navigate, make sure there’s a real `<a href=…>` anchor for crawlers.

- Keep the important navigation shallow. Avoid burying products behind many clicks or inside client-side-only widgets. Favor category landing pages that list products with static HTML link elements.

- Move critical product text (title, price, short description, availability) into server-rendered HTML instead of loading it after page load via AJAX. Bots and pre-renderers often don’t execute complex client-side flows reliably.

- Audit apps and theme includes for blocked resources. If CSS/JS files are served from third-party hosts, ensure they’re crawlable and not returning 403/404s to bots.

Run a focused crawl with Screaming Frog or Sitebulb, specifying the /products/ path to map how pages are reached from collections and menus. Flag any product with zero inbound internal links as an immediate priority to fix — either add it to an existing collection or create a featured list page that links to it.
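If you want a quick cross-check outside a crawler UI, the orphan test can be sketched in a few lines of Python. This is a minimal sketch, assuming you already have the rendered HTML of your collection and menu pages plus a list of product handles from a Shopify export; `ProductLinkParser` and `find_orphans` are hypothetical names, not part of any tool mentioned here. It only counts real `<a href>` anchors, so JS-only navigation shows up as a missing link.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ProductLinkParser(HTMLParser):
    """Collects product handles from static <a href> links in a page."""

    def __init__(self):
        super().__init__()
        self.handles = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href") or ""
        parts = [p for p in urlparse(href).path.split("/") if p]
        # Matches /products/<handle> and /collections/<c>/products/<handle>.
        if "products" in parts[:-1]:
            self.handles.add(parts[parts.index("products") + 1])


def find_orphans(all_handles, pages_html):
    """Return handles that no supplied page links to with a real anchor."""
    linked = set()
    for html in pages_html:
        parser = ProductLinkParser()
        parser.feed(html)
        linked |= parser.handles
    return sorted(set(all_handles) - linked)
```

Feeding it every collection page and the homepage gives a list of handles to add to a collection or a featured list page.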

Indexing signals and ensuring product pages get indexed

Indexability is mostly about clear signals: no stray noindex tags, correct canonicalization, and reliable sitemap coverage. Shopify normally generates sensible defaults, but theme customizations, apps, or SEO tools can accidentally inject meta robots tags or conflicting canonical tags that cause pages to be excluded.

Use this practical checklist to get product pages indexed and monitor progress:

- Inspect a sample product in Google Search Console (GSC): open URL Inspection to see the page indexing status, whether the page was crawled and indexed, and whether Google sees the HTML and structured data as expected.

- Remove accidental noindex directives: search your theme for `meta name="robots"` and confirm product templates do not add “noindex” unless intentional.

- Canonical alignment: ensure the product template outputs `<link rel="canonical" href="https://yourdomain.com/products/product-handle" />` and that variant query parameters are not the canonical destination.

- Submit and monitor sitemaps: Shopify exposes /sitemap.xml (and product sitemaps like /sitemap_products_1.xml). Submit the main sitemap index to GSC and re-submit after bulk catalog changes.

- Avoid filter and variant duplicates: when filters create unique URLs (e.g., ?color=red&page=2), use canonical tags pointing to the clean product or collection URL. Google retired Search Console’s URL Parameters tool in 2022, so canonical tags and consistent internal links are the signals to rely on.

- After fixes, use GSC’s “Request Indexing” for high-priority product updates and check the Page indexing report (formerly Coverage) for statuses such as “Crawled - currently not indexed” or “Excluded by ‘noindex’ tag”.
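The noindex and canonical checks in this list are easy to script against saved page HTML. The following is a minimal sketch using only the Python standard library; `HeadSignals` and `audit_indexability` are hypothetical names, and the expected canonical is something you supply from your own catalog data.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Records robots meta directives and canonical hrefs found in a page."""

    def __init__(self):
        super().__init__()
        self.robots = []
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}
        if tag == "meta" and a.get("name", "").lower() in ("robots", "googlebot"):
            self.robots.append(a.get("content", "").lower())
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonicals.append(a.get("href", ""))


def audit_indexability(html, expected_canonical):
    """Return a list of human-readable problems; empty means none found."""
    signals = HeadSignals()
    signals.feed(html)
    problems = []
    if any("noindex" in content for content in signals.robots):
        problems.append("noindex directive present")
    if len(signals.canonicals) != 1:
        problems.append(f"expected 1 canonical tag, found {len(signals.canonicals)}")
    elif signals.canonicals[0] != expected_canonical:
        problems.append(f"canonical points to {signals.canonicals[0]}")
    return problems
```

An empty list only means the raw HTML looks clean. It does not cover an `X-Robots-Tag` HTTP header or tags injected by JavaScript, so URL Inspection remains the final word.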

Indexing is not instant. Track index status over weeks and prioritize high-revenue SKUs for manual inspection and immediate reindexing requests. For a mid-size catalog, focus first on top-selling and seasonal products so the crawl budget is spent where it matters.

Speed optimization for Shopify product pages (Core Web Vitals)

Core Web Vitals (LCP, INP, and CLS) are measurable ways to quantify page experience. INP replaced FID as the responsiveness metric in March 2024. On product pages, the hero image, price, and CTA are the visible elements that determine LCP and CLS. The challenge is keeping rich media while minimizing render work.

Actionable steps to improve Core Web Vitals on Shopify:

- Optimize hero and product images: use Shopify’s image CDN to serve automatically sized images. Deliver WebP where supported and set responsive image sizes (1x/2x). Target desktop hero images around 1200–1400px wide (not 3000px) to speed LCP.

- Preload critical assets: preload the hero image and essential web fonts with `<link rel="preload" as="image" href="…">` and `rel="preload" as="font"`. Inline critical CSS for above-the-fold content to avoid rendering delays.

- Minimize render-blocking JS/CSS: defer non-critical scripts, split heavy app scripts into app-embed or async loads, and remove unused CSS. Audit apps and remove or replace those that inject large, blocking resources.

- Lazy load below-the-fold media: for carousels and secondary product images, use native `loading="lazy"` or a lightweight lazy-loading script to reduce initial payload. Do not lazy-load the hero image itself, since that delays LCP.

- Limit third-party scripts: analytics, chat, and review widgets often drag down LCP and responsiveness (INP). Delay non-essential third-party scripts until the page is interactive, or replace them with leaner alternatives.

Shopify-specific tips: use Online Store 2.0 app blocks and app-embed controls to reduce hard-coded script injections, rely on Shopify’s global CDN for assets, and version-control theme changes in a staging branch. Validate changes using Lighthouse, PageSpeed Insights, and the Core Web Vitals report in Search Console. Run Lighthouse lab tests for the top 50 product pages and track LCP targets under 2.5s and CLS under 0.1 where possible.
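To turn those targets into a repeatable pass/fail check, you can classify each measurement against Google’s published Core Web Vitals thresholds. A minimal sketch follows, assuming you have already collected per-page metrics (for example from a PageSpeed Insights export). The input shape and the names `rate_metric` and `failing_pages` are my assumptions, not a standard format.

```python
# (good_max, needs_improvement_max) per metric, from Google's published
# Core Web Vitals thresholds. Units: seconds, milliseconds, unitless.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate_metric(name, value):
    """Classify one measurement as 'good', 'needs improvement' or 'poor'."""
    good_max, ni_max = THRESHOLDS[name]
    if value <= good_max:
        return "good"
    if value <= ni_max:
        return "needs improvement"
    return "poor"


def failing_pages(measurements):
    """Return {url: {metric: rating}} for every metric that is not 'good'."""
    report = {}
    for url, metrics in measurements.items():
        bad = {m: rate_metric(m, v) for m, v in metrics.items()
               if rate_metric(m, v) != "good"}
        if bad:
            report[url] = bad
    return report
```

Running this over your top 50 product pages after each theme deploy gives a short list of regressions instead of a pile of Lighthouse reports.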

Structured data and product schema for rich results

Product schema is one of the fastest ways to make product pages clearer to search engines and to become eligible for rich results. Shopify themes can output JSON-LD that pulls directly from product objects so it always matches the visible page content.

Implementation checklist and best practices:

- Use a single, concise JSON-LD Product block. Include name, description, image (same URL shown on page), sku, url, and an offers object with price, priceCurrency, and availability. If you surface reviews, add aggregateRating with ratingValue and reviewCount.

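- Bind schema fields to Shopify variables so they reflect live product data. Example (conceptual): use `{{ product.title }}`, `{{ shop.url }}{{ product.url }}`, a plain decimal price such as `{{ product.price | divided_by: 100.0 }}`, and inventory status to populate JSON-LD automatically. Avoid the `money` filter here: it adds currency formatting, while the schema `price` should be a bare number with the currency in `priceCurrency`.

- Maintain parity between schema and visible content. If schema says “in stock” but the page shows “backorder”, Google may ignore rich results or flag inconsistencies.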

- For variant-rich products, either output a single offers object representing the current default variant or list multiple offers for significant variant-specific differences. Be careful: duplicating identical offers across many variants can create noise; prefer one canonical offer unless variants differ materially.

Quick example of the structure (trimmed for illustration):

    {
      "@context": "https://schema.org/",
      "@type": "Product",
      "name": "Classic Leather Wallet",
      "image": ["https://example.com/products/wallet-1.jpg"],
      "sku": "WALLET-001",
      "offers": {
        "@type": "Offer",
        "priceCurrency": "USD",
        "price": "49.00",
        "availability": "https://schema.org/InStock",
        "url": "https://yourstore.com/products/wallet"
      },
      "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": "4.6",
        "reviewCount": "132"
      }
    }

Validate each page with Google’s Rich Results Test and re-run periodically after theme or app changes. Keep an automated check in your crawl to ensure schema exists and matches the visible product metadata.
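The automated check can be as simple as extracting the JSON-LD from saved HTML and comparing it with what the page shows. Here is a minimal sketch, assuming one Product block per page and a visible price you have scraped or exported separately; `JsonLdParser` and `check_product_schema` are hypothetical names, and the required-field list is my own selection, not Google’s full requirement set.

```python
import json
from html.parser import HTMLParser


class JsonLdParser(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append(json.loads("".join(self._buffer)))


def check_product_schema(html, visible_price):
    """Return problems found in the page's Product JSON-LD."""
    parser = JsonLdParser()
    parser.feed(html)
    products = [b for b in parser.blocks
                if isinstance(b, dict) and b.get("@type") == "Product"]
    if len(products) != 1:
        return [f"expected 1 Product block, found {len(products)}"]
    product = products[0]
    offer = product.get("offers") or {}
    if isinstance(offer, list):  # multiple offers: inspect the first one
        offer = offer[0] if offer else {}
    problems = [f"missing {field}" for field in ("name", "image", "sku")
                if not product.get(field)]
    problems += [f"missing offers.{field}"
                 for field in ("price", "priceCurrency", "availability")
                 if not offer.get(field)]
    if offer.get("price") and str(offer["price"]) != visible_price:
        problems.append(f"schema price {offer['price']} != visible {visible_price}")
    return problems
```

Malformed JSON raises `json.JSONDecodeError`, which is worth surfacing as a failure in its own right. This complements the Rich Results Test rather than replacing it.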

Canonicalization, URLs, and duplicate content management

Variant URLs and collection-specific links often create multiple URLs with essentially the same content. Without a consistent canonical strategy, ranking signals fragment and crawl budget is wasted. The simplest, most robust approach for most stores is to canonicalize all variant and filtered URLs to the clean product URL.

Implementation guidance:

- On product pages, output a canonical tag pointing to the primary product URL without query parameters: `<link rel="canonical" href="https://yourdomain.com/products/product-handle" />`. Most themes already emit this from the `<head>` in layout/theme.liquid via Shopify’s `{{ canonical_url }}` object, so confirm that no app or custom template adds a second, conflicting tag.

- If collection pages generate alternate product URLs (e.g., /collections/sale/products/product-handle), keep the product page canonical pointing to /products/product-handle so Google knows which to index.

- For variant selectors that use `?variant=`, ensure those parameters are not made canonical. Rely on canonical tags for this; the GSC URL Parameters tool that once handled parameterized URLs was retired in 2022.

- Design safe redirects for retired or merged products: implement 301s from the old product URL to the nearest relevant live product or parent category, and document these redirects in a spreadsheet so marketing and content teams know which legacy links to update.

- When a variant truly deserves its own indexed URL (unique copy, very different images, or significantly different specs), treat it as a unique product: canonicalize accordingly, surface unique content, and add it to the sitemap as an independent URL.

Operational tip: maintain a canonical map in a CSV/Sheet mapping product handles to their canonical target and review it after large imports or theme changes. Include canonical checks in your automated crawl so a sudden influx of parameterized indexed URLs triggers an alert.
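The canonical map check can be sketched as a small normalizer that derives the expected canonical from any crawled product URL and compares it with the tag the crawler found. This is a minimal sketch assuming the simple strategy described above (every variant, filtered, or collection-scoped URL canonicalizes to `/products/<handle>`). The function names are hypothetical, and variants that deliberately keep their own indexed URL would need an exception list.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_product_url(url):
    """Map a variant, filtered or collection-scoped URL to /products/<handle>.

    Returns None when the URL is not a product URL.
    """
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    if "products" not in segments[:-1]:
        return None
    handle = segments[segments.index("products") + 1]
    # Drop the query string and fragment, and any /collections/<x>/ prefix.
    return urlunsplit((parts.scheme, parts.netloc, f"/products/{handle}", "", ""))


def canonical_mismatches(rows):
    """rows: (crawled_url, canonical_found) pairs, e.g. from a crawl export."""
    return [(url, found) for url, found in rows
            if canonical_product_url(url) not in (None, found)]
```

Any row returned by `canonical_mismatches` is a page whose canonical tag disagrees with the clean product URL and is worth a manual look.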

Robots.txt, sitemaps, and crawl-budget hygiene

Shopify serves a dynamic robots.txt at yourdomain.com/robots.txt. The default blocks admin, checkout, and account areas and otherwise allows access. Still, every store should verify robots rules after installing apps or proxying assets, because blocked CSS/JS/images can prevent proper rendering and cause indexing problems.

What to check and how to act:

- Open `/robots.txt` and confirm that `/products/` and `/collections/` are allowed. Use the robots.txt report and URL Inspection in Search Console (the standalone robots.txt Tester has been retired) to confirm URLs and critical resources (CSS, JS) are not being blocked.

- Don’t block static assets needed for rendering. If robots.txt denies access to fonts, CSS, or JS hosted on subdomains, Googlebot may see an unstyled page and miss important content.

- Verify sitemap coverage: Shopify creates a sitemap index and splits large sitemaps (products, collections, pages). Submit the sitemap index to GSC and spot-check product sitemaps (e.g., /sitemap_products_1.xml) to ensure all products are listed and have 200 responses.

- Prune low-value pages: thin category pages, internal search result pages, or duplicate filter pages should be removed, consolidated, or noindexed to focus crawl budget on pages that convert. Fix redirect chains — every redirect is crawl waste.

- Monitor Crawl Stats in GSC to detect spikes or drops in crawl rates, and use Coverage reports to fix 404s and server errors promptly. Orphaned pages (present in sitemap but not linked from anywhere) should be relinked or removed.
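For a scripted version of the robots check, Python’s standard `urllib.robotparser` can evaluate a list of URLs against the text of your robots.txt. This is a minimal sketch; `blocked_urls` is a hypothetical helper and the URLs in the usage line are placeholders. As far as I know the stdlib parser does plain prefix matching and does not interpret Google-style `*` and `$` wildcards, so treat its answer as a first pass and confirm edge cases in Search Console.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]
```

Call it with the downloaded robots.txt body and a sample of product, collection, and asset URLs; anything it returns other than admin, cart, or checkout paths deserves attention.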

When you perform bulk catalog updates, re-submit your sitemap and run a targeted crawl to ensure no new 4xx/5xx responses slipped into production. Small, regular hygiene prevents crawl budget from being wasted on stale or low-value URLs.
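The sitemap spot-check can also be scripted. The sketch below parses a sitemap (or sitemap index) you have already downloaded and compares it with the set of URLs your crawl reached through internal links. It assumes the standard sitemaps.org namespace; `sitemap_locs` and `sitemap_gaps` are hypothetical names.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Return every <loc> value in a sitemap or sitemap index document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip()
            for loc in root.iterfind(".//sm:loc", SITEMAP_NS) if loc.text]


def sitemap_gaps(xml_text, crawled_urls):
    """Compare a product sitemap with the URLs a crawl reached via links."""
    listed = set(sitemap_locs(xml_text))
    crawled = set(crawled_urls)
    return {
        "in_sitemap_not_linked": sorted(listed - crawled),
        "linked_not_in_sitemap": sorted(crawled - listed),
    }
```

URLs under `in_sitemap_not_linked` are the orphan candidates described above. Status-code checks still need a real HTTP request per URL, which this sketch leaves out.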

Automation, monitoring, and ongoing optimization workflow

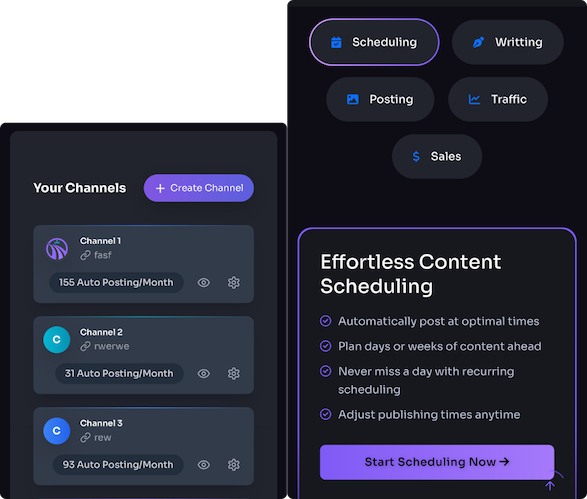

Technical SEO is not a one-and-done sprint — it’s a steady cadence of monitoring, testing, and incremental improvements. Automate the heavy lifting so you can focus on high-impact work. Trafficontent can play a central role in this loop by connecting Shopify signals to content publishing and internal linking workflows.

A repeatable automation-first workflow:

- Daily/weekly crawls: schedule Screaming Frog or Sitebulb runs focused on /products/, /collections/, and /sitemap_products_*.xml. Export actionable issues: noindex tags, broken canonicals, missing schema, and blocked resources.

- Integrate data sources: pull GSC coverage and Core Web Vitals, Lighthouse lab metrics from PageSpeed Insights, and Shopify product exports into a single dashboard (Looker Studio). Map issues to product handles for precise ownership.

- Alerts and SLAs: when LCP crosses a threshold, or a top-50 product becomes “Crawled — currently not indexed,” trigger Slack/email alerts with a suggested fix and owner. Automate ticket creation in your project tracker for high-priority fixes.

- Trafficontent-driven content and linking: connect Trafficontent to Shopify to schedule and publish SEO-focused content (buying guides, comparison posts, how-to articles). Use Trafficontent’s auto-publish or draft-scheduling to create weekly content that links to priority products, forming topical clusters that reinforce product relevance.

- Automated internal linking: leverage Trafficontent templates to inject contextually relevant internal links to product pages when a new blog or guide is published. This reduces manual linking work and ensures product pages get frequent fresh internal inbound links.

- Continuous validation: after deploying fixes or publishing content, re-run URL Inspection requests via the GSC API for key product pages (or use GSC’s UI for priority items) and monitor index status changes over the following weeks.
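The alerting step in this workflow boils down to a rule over joined data. Here is a minimal sketch of that rule, assuming you have already merged sales rank, LCP, and Search Console index state per product into one record; `PageStatus` and `build_alerts` are hypothetical names, and delivery to Slack, email, or a ticket tracker is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class PageStatus:
    handle: str
    sales_rank: int   # 1 = best seller (from a Shopify export)
    lcp_s: float      # field or lab LCP in seconds
    index_state: str  # status string copied from Search Console


def build_alerts(pages, top_n=50, lcp_limit_s=2.5):
    """Return alert messages for top-selling products that need attention."""
    alerts = []
    for page in sorted(pages, key=lambda p: p.sales_rank):
        if page.sales_rank > top_n:
            continue  # outside the priority set
        if page.lcp_s > lcp_limit_s:
            alerts.append(f"{page.handle}: LCP {page.lcp_s:.1f}s exceeds {lcp_limit_s}s")
        if "not indexed" in page.index_state.lower():
            alerts.append(f"{page.handle}: index state is '{page.index_state}'")
    return alerts
```

Keeping the rule as a pure function makes it easy to test and to reuse regardless of which notification channel you wire it to.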

Example use case: you identify 25 under-indexed but top-selling SKUs. Use Trafficontent to generate five short buying guides (one per category) that include internal links to those SKUs. Schedule publishing over two weeks, run daily crawls to confirm internal links are discovered, and watch index coverage and organic impressions in Search Console. This automation loop reduced manual effort and increased index coverage in real-world tests.

Next step: run a single Screaming Frog crawl of your top 200 SKUs, submit your sitemap in GSC, and set up a Trafficontent campaign to publish one SEO guide per collection this month. Track index coverage and Core Web Vitals for those pages, and iterate from concrete data rather than guesswork.