When you add AI to your keyword workflow (generating ideas, drafting meta, or auto-publishing product content), you need a measurement plan that proves the work moves business metrics, not just rankings. This guide walks Shopify merchants and WordPress content managers through a practical, repeatable approach: set SMART goals, wire Trafficontent into your stack, track the right KPIs, and turn insight into action with dashboards and experiments.

You’ll get concrete integrations (GA4, Search Console, Looker Studio), data-hygiene rules, efficiency KPIs for automation, recommended alert thresholds, and an operational playbook for common outcomes. Read this as a playbook you can implement over 30/90/180-day windows to demonstrate that AI-assisted keyword work actually grows organic traffic and revenue.

Set clear goals and a KPI framework for AI-driven keyword work

Start by translating business outcomes—traffic, conversions, AOV—into a compact set of SMART goals tied to the AI process. For example: “Increase organic sessions from AI-identified keywords by 20% in 180 days and lift revenue attributed to those pages by 12%.” That single sentence defines what success looks like, how it will be measured, and the timeframe for evaluation. Assign an owner who will be responsible for the measurement and follow-up.

Break goals into leading and lagging indicators. Leading indicators—keyword rank movement, impressions, and search CTR—show whether content is visible and compelling in the search results. Lagging indicators—conversions, revenue per visitor, and customer lifetime value—show whether visibility is translating into business impact. Track both sets to forecast outcomes and to validate the cause-effect relationship after you roll out AI changes.

Map KPIs to the funnel: discovery (impressions, new keyword coverage), consideration (click-through rate, time on page, scroll depth), and action (landing-page conversion rate, revenue per visitor). For ecommerce, add SKU-level revenue and cart-to-checkout rates for pages targeted by AI. Set target timeframes that match your cadence: quick wins in 30 days for meta updates, 90 days for content ranking improvements, and 180 days for full revenue impact on competitive terms.

Before you publish a single AI-optimized post, capture baselines: current organic sessions for target pages, average position for selected keywords, CTR, conversion rates, and revenue per visitor. Baselines make it possible to calculate lift and give you confidence when you claim an AI-driven win.
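
As a minimal sketch of the lift calculation that baselines make possible, the snippet below compares a baseline snapshot with a later one. The metric names and all figures are hypothetical, chosen only to illustrate the arithmetic.

```python
def percent_lift(baseline: float, current: float) -> float:
    """Relative change from baseline to current, expressed as a percentage."""
    if baseline <= 0:
        raise ValueError("baseline must be positive to compute lift")
    return (current - baseline) / baseline * 100.0


# Hypothetical baseline and follow-up figures for one keyword cohort.
baseline = {"organic_sessions": 4000, "ctr": 0.020, "conversion_rate": 0.015}
current = {"organic_sessions": 4600, "ctr": 0.025, "conversion_rate": 0.015}

# Lift per metric, rounded to one decimal place for reporting.
lift = {name: round(percent_lift(baseline[name], current[name]), 1) for name in baseline}
print(lift)
```

Keeping the baseline snapshot as stored data, rather than recomputing it later, avoids the baseline drifting as reporting windows change.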

Core SEO and ecommerce KPIs to track

Keep your KPI list short and decisive. The metrics you must watch are organic sessions (by landing page), Search Console impressions and average position (by query), click-through rate for search snippets, percentage of target keywords in top 3/10/30, landing-page conversion rate, revenue per visitor, and SKU-level revenue connected to AI-targeted pages. Each metric answers a different question: visibility, appeal, intent alignment, and business outcome.
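
The "percentage of target keywords in top 3/10/30" metric can be sketched as follows, assuming you export average positions per keyword from Search Console or a rank tracker. The function name and the sample keywords are illustrative, not part of any tool's API.

```python
def top_n_coverage(positions, buckets=(3, 10, 30)):
    """Share of tracked keywords ranking at or above each cutoff.

    positions maps keyword -> average position, or None when the
    keyword does not rank at all.
    """
    total = len(positions)
    if total == 0:
        return {n: 0.0 for n in buckets}
    coverage = {}
    for n in buckets:
        hits = sum(1 for pos in positions.values() if pos is not None and pos <= n)
        coverage[n] = hits / total
    return coverage


# Hypothetical export of average positions for five target keywords.
positions = {
    "cordless drill": 2.4,
    "drill bit set": 8.1,
    "impact driver": 14.0,
    "home repair kit": 41.5,
    "wall anchor guide": None,  # not ranking yet
}
print(top_n_coverage(positions))
```

Counting non-ranking keywords in the denominator keeps the metric honest: coverage only rises when more of the target list actually ranks.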

Use Google Search Console to track impressions, clicks, and average position for the exact queries the AI identified. If impressions are rising but clicks aren’t, that’s a CTR optimization problem—rewrite title tags and meta descriptions, add benefit-led snippets, or implement structured data to enhance rich results. Rank trackers like Ahrefs or SEMrush are useful for monitoring broader ranking trends and keyword coverage, particularly for competitive target terms that the AI surfaces.

For ecommerce attribution, implement GA4 ecommerce events (view_item, add_to_cart, purchase) and compute revenue per organic session and SKU-level revenue tied to specific landing pages. A true “keyword strategy win” is when a cluster of AI-targeted keywords shows a pattern: rising impressions → improved CTR → better rankings → increased conversion rate and incremental revenue. If only rankings move without revenue, prioritize improving the consideration and action layer (copy, UX, CTAs).
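
A rough sketch of the two ecommerce calculations named above, revenue per organic session and SKU-level revenue, might look like this. It assumes purchase rows have already been exported with a landing page and SKU attached; the field names and figures are hypothetical.

```python
from collections import defaultdict


def revenue_per_organic_session(sessions_by_page, purchases):
    """Revenue divided by organic sessions for each landing page."""
    revenue_by_page = defaultdict(float)
    for purchase in purchases:
        revenue_by_page[purchase["landing_page"]] += purchase["revenue"]
    return {
        page: revenue_by_page[page] / count
        for page, count in sessions_by_page.items()
        if count > 0  # skip pages with no sessions to avoid dividing by zero
    }


def revenue_by_sku(purchases):
    """Total revenue per SKU across all landing pages."""
    totals = defaultdict(float)
    for purchase in purchases:
        totals[purchase["sku"]] += purchase["revenue"]
    return dict(totals)


# Hypothetical exported data for two AI-targeted landing pages.
sessions_by_page = {"/products/drill": 1000, "/blog/home-fix": 500}
purchases = [
    {"landing_page": "/products/drill", "sku": "SKU-1", "revenue": 120.0},
    {"landing_page": "/products/drill", "sku": "SKU-2", "revenue": 80.0},
    {"landing_page": "/blog/home-fix", "sku": "SKU-1", "revenue": 50.0},
]
print(revenue_per_organic_session(sessions_by_page, purchases))
print(revenue_by_sku(purchases))
```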

Finally, define the thresholds for success. For many stores, a reasonable rule is: 10–20% lift in impressions or clicks within 90 days, 5–10% increase in landing-page conversion rate within 180 days, or clear revenue gains per SKU within the first 180 days. Use these thresholds as gating criteria before scaling any AI-driven template or batch.

Technical and UX metrics that affect keyword performance

SEO doesn’t live in a vacuum. Technical and UX factors directly influence whether your AI-optimized content can rank and convert. Monitor indexation and sitemap coverage first: ensure the pages you publish are discoverable by Google. A sudden drop in indexed pages after an auto-publish batch often points to a sitemap or robots configuration error and will kill visibility for entire keyword cohorts.

Core Web Vitals, namely Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay as a Core Web Vital in March 2024), and Cumulative Layout Shift (CLS), are ranking and engagement signals. Track these at page level for your highest-value templates (product pages, category landing pages, and top AI-written posts). Pages with poor vitals typically see higher bounce rates and lower dwell time, which undermines your keyword work even if the content is semantically relevant.
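
To make page-level tracking concrete, here is a small sketch that rates a measurement against Google's published "good" and "poor" boundaries for LCP, INP, and CLS. The metric keys (`lcp_s`, `inp_ms`, `cls`) and the sample page values are hypothetical naming choices for this example.

```python
# (good_max, poor_min) boundaries per metric, per Google's published guidance:
# LCP in seconds, INP in milliseconds, CLS unitless.
THRESHOLDS = {
    "lcp_s": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate_vital(metric, value):
    """Classify one Core Web Vitals measurement."""
    good_max, poor_min = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= poor_min:
        return "needs improvement"
    return "poor"


# Hypothetical field measurements for one product-page template.
page = {"lcp_s": 2.1, "inp_ms": 350, "cls": 0.3}
print({metric: rate_vital(metric, value) for metric, value in page.items()})
```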

Other technical checks include canonicalization, structured data/schema (product, FAQ, breadcrumb), crawl errors surfaced by GSC, and mobile responsiveness. Use Screaming Frog or site crawlers to audit internal linking and ensure your AI-generated pages receive internal links that distribute authority. If a page rises in rank but conversion drops, inspect layout shifts, mobile UX, or a change in CTA placement that might have been introduced by templating or auto-publish rendering.

Finally, tie technical metrics to SEO outcomes in your dashboard. When a page’s LCP improves after an image-optimization pass, watch for accompanying CTR or engagement gains. If indexation regresses by more than 5% after an automated rollout, trigger a rollback and a root-cause investigation—these concrete rules keep automation from creating systemic issues.
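
The 5% indexation rule can be expressed as a simple check. This is a sketch only: it assumes you can count indexed pages for the affected batch before and after a rollout, and the function names are made up for illustration.

```python
def indexation_loss(indexed_before, indexed_after):
    """Fraction of previously indexed pages that are no longer indexed."""
    if indexed_before <= 0:
        raise ValueError("indexed_before must be positive")
    return max(0.0, (indexed_before - indexed_after) / indexed_before)


def should_rollback(indexed_before, indexed_after, threshold=0.05):
    """True when indexation regressed by more than the threshold (5% by default)."""
    return indexation_loss(indexed_before, indexed_after) > threshold


# Hypothetical counts taken before and after an automated rollout.
print(should_rollback(1000, 940))  # 6% loss
print(should_rollback(1000, 960))  # 4% loss
```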

Toolset and integrations: wiring Trafficontent, WordPress, Shopify and analytics

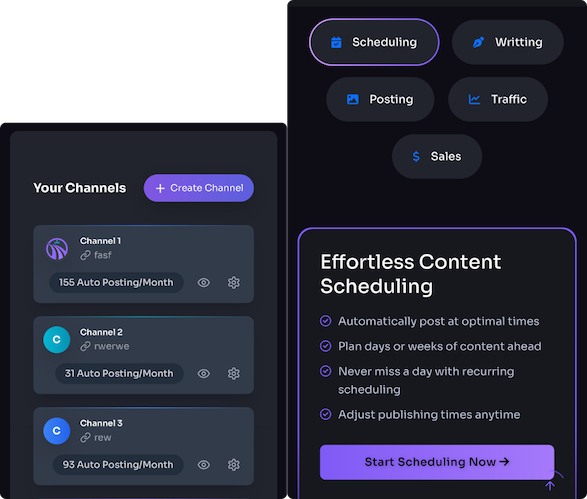

Build a measurement pipeline that connects Trafficontent, your CMS (WordPress or Shopify), and analytics platforms. On WordPress, install the Trafficontent plugin to push keyword briefs, meta templates, and internal linking suggestions directly into the editor. For Shopify, use Trafficontent’s integration to sync product briefs and schedule auto-publish jobs to your store. These integrations let your AI tool write the content and tag it with identifiers you can track downstream.

Set GA4 as your analytics backbone and link it to Google Search Console for search performance. Enable enhanced measurement in GA4 so core events are captured, and add ecommerce measurement for Shopify or WooCommerce. Feed GA4 and Search Console into a Looker Studio dashboard to combine impressions, clicks, sessions, and revenue in one view. Pull rank data from Ahrefs/SEMrush and page-level technical signals from Screaming Frog into the same dashboard for a single source of truth.

Use Trafficontent’s event hooks or dataLayer pushes to tag content as “AI-generated” or attach a keyword cohort ID. When Trafficontent auto-publishes a batch, record the cohort ID in page metadata, a GA4 custom dimension, or an internal (non-UTM) query parameter so sessions can be associated with that work in GA4. Reserve UTM parameters for promotional links: a UTM on the page’s own URL would overwrite the organic-search source you are trying to measure. For Shopify, make sure the Trafficontent integration records the draft-to-publish lifecycle and keeps a reference to the original keyword brief: that lineage is essential for attribution.

Finally, schedule bi-directional feedback loops: feed performance metrics (CTR, time on page, conversions) back into Trafficontent to prioritize future briefs. This close integration turns Trafficontent from a content generator into a closed optimization system, where the AI learns which intents and snippets deliver revenue and which need rewrites.

Data hygiene and tagging for reliable attribution

Reliable KPIs start with consistent naming and tracking conventions. Standardize content taxonomy: assign a content type (product, category, blog), a keyword cohort ID, and a consistent slug pattern. Slugs should be human-readable and stable so historical links remain valid. When Trafficontent publishes in bulk, ensure it follows your slug rules and preserves canonical tags to avoid duplicate-content problems.

UTM naming standards are crucial for cross-channel attribution. Create a UTM schema for scheduled social posts and email sends that references the Trafficontent campaign and the keyword cohort (e.g., utm_source=instagram&utm_medium=social&utm_campaign=tc_kw_home-fix_2025). This allows you to separate organic search sessions driven by keyword work from promotional traffic sourced by schedulers or social pushes.
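
One way to enforce a UTM schema is to generate links from code instead of typing them by hand. The sketch below follows the `tc_kw_<cohort>_<year>` pattern from the example above; `build_campaign` and `add_utm` are hypothetical helper names, and the URL is a placeholder.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def build_campaign(cohort, year):
    """Campaign name in the tc_kw_<cohort>_<year> pattern used in this guide."""
    return f"tc_kw_{cohort.lower()}_{year}"


def add_utm(url, source, medium, campaign):
    """Return url with UTM parameters added, keeping any existing query values."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {
            "utm_source": source.lower(),
            "utm_medium": medium.lower(),
            "utm_campaign": campaign,
        }
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


link = add_utm(
    "https://example.com/blog/home-fix-guide",
    "instagram",
    "social",
    build_campaign("home-fix", 2025),
)
print(link)
```

Lowercasing source and medium in one place prevents "Instagram" and "instagram" from splitting into separate rows in GA4 reports.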

For ecommerce tracking, implement GA4 ecommerce events accurately: view_item, add_to_cart, begin_checkout, purchase. Prefer server-side tagging or GTM with server containers to reduce ad-blocking noise and improve purchase attribution. Tag purchases with the landing page ID or keyword cohort so revenue can be attributed back to the AI target. Validate your pipeline by running “known-value” tests: make a small test purchase through an instrumented page and confirm it shows up in GA4 with the correct campaign and cohort tags.

Before trusting KPIs, run a data-quality checklist: verify that Search Console clicks and GA4 organic landing-page sessions agree within reasonable bounds, check for broken UTMs or missing cohort IDs, and ensure no unintended redirects strip your parameters. Small hygiene wins (consistent slugs, UTMs, and event tags) prevent big attribution errors that can either falsely credit or penalize your AI efforts.
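
Part of that checklist can be automated. The sketch below flags pages with a missing cohort ID or with Search Console clicks and GA4 sessions that diverge beyond a tolerance. The 30% tolerance, the field names, and the sample rows are assumptions for illustration; the two tools count differently, so some gap is normal.

```python
def quality_issues(rows, tolerance=0.30):
    """Return (page, problem) pairs found in a per-page export."""
    issues = []
    for row in rows:
        if not row.get("cohort_id"):
            issues.append((row["page"], "missing cohort_id"))
        clicks, sessions = row["gsc_clicks"], row["ga4_sessions"]
        # Compare the gap relative to Search Console clicks.
        if clicks > 0 and abs(sessions - clicks) / clicks > tolerance:
            issues.append((row["page"], "GSC clicks and GA4 sessions diverge"))
    return issues


# Hypothetical joined export of Search Console and GA4 data.
rows = [
    {"page": "/blog/home-fix", "cohort_id": "kw-home-fix", "gsc_clicks": 100, "ga4_sessions": 110},
    {"page": "/products/drill", "cohort_id": "", "gsc_clicks": 200, "ga4_sessions": 90},
]
for page, problem in quality_issues(rows):
    print(page, "->", problem)
```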

Measuring the impact of automation and AI on workflow efficiency

Beyond traffic and revenue, measure how Trafficontent and automation change your team’s throughput and quality. Create efficiency KPIs: publish velocity (articles/products published per week), time spent per post (research, drafting, QA), rollback or error incidents, and content throughput (number of keyword clusters covered per month). Baseline these metrics before automation and measure time saved after enabling Trafficontent’s auto-publish and templating.

Quantify time savings with concrete examples. If a manual product page requires four hours to research, write, and QA, and Trafficontent’s AI with a template reduces that to one hour, that’s three hours saved per page. Multiply by your monthly volume—if you publish 50 pages a month, that’s 150 hours saved (nearly four full-time weeks). Capture these as “hours/month saved” and convert to cost savings for stakeholders using your average hourly rate.
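
The arithmetic above is easy to keep in a small helper so the same formula feeds every report. The hourly rate below is a made-up figure; substitute your own.

```python
def hours_saved(manual_hours, automated_hours, pages_per_month):
    """Hours saved per month when each page takes less time to produce."""
    return (manual_hours - automated_hours) * pages_per_month


def cost_saved(hours, hourly_rate):
    """Convert saved hours into a cost figure for stakeholders."""
    return hours * hourly_rate


monthly_hours = hours_saved(manual_hours=4, automated_hours=1, pages_per_month=50)
print(monthly_hours)                              # 150 hours, as in the example above
print(cost_saved(monthly_hours, hourly_rate=40))  # hypothetical rate of 40 per hour
```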

Track quality signals too: engagement (time on page, scroll depth), bounce rate, and the rate of content rollbacks or major edits after publication. A rise in publish velocity paired with stable or improved engagement suggests your automation is both faster and better. If velocity increases but engagement falls, investigate template quality: are CTAs buried? Is the content too generic? Use feedback loops—Trafficontent can receive engagement metrics to prioritize better prompts and briefs.

Finally, report efficiency gains alongside revenue impact. A dashboard that shows “X hours saved per month” and “Y% revenue uplift from AI cohorts” makes it easy for operations and finance to justify further investment in automation and to scale Trafficontent usage across categories or regions.

Experimentation and validation: A/B tests and controlled comparisons

To separate correlation from causation, run controlled tests. Search Console has no built-in A/B testing, so run headline/meta tests with an SEO split-testing tool or a server-side variant testing tool where possible, then read the CTR and click differences from Search Console data. For product pages, run template vs. manual trials: randomly assign SKUs to the AI template cohort and a manual cohort, then compare conversion rates and revenue per visitor over a 90-day window. Randomization and a control group are the simplest ways to isolate AI impact.
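
Random assignment of SKUs to the two cohorts can be sketched as below. The cohort labels and SKU names are hypothetical; the fixed seed only makes the split reproducible so it can be audited later.

```python
import random


def assign_cohorts(skus, seed=42):
    """Randomly split SKUs into an AI-template cohort and a manual cohort."""
    rng = random.Random(seed)  # seeded so the same input yields the same split
    shuffled = list(skus)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "ai_template": sorted(shuffled[:half]),
        "manual": sorted(shuffled[half:]),
    }


skus = [f"SKU-{n:03d}" for n in range(1, 11)]
cohorts = assign_cohorts(skus)
print(cohorts["ai_template"])
print(cohorts["manual"])
```

Shuffling the whole list before splitting avoids picking "interesting" SKUs by hand, which would bias the comparison.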

Be realistic about sample sizes. For CTR tests, you need impressions; small or niche pages will take longer to reach statistical power. As a rough floor, aim for at least several thousand impressions per variant for CTR tests and several hundred sessions per variant for conversion-rate tests, and expect to need many times more when the baseline rate is low or the lift you want to detect is small. Use an online A/B test calculator to determine required sample size based on baseline metrics and the minimum detectable effect you care about (for example, detect a 10% lift in CTR or a 5% lift in conversion rate).
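
If you prefer to compute the requirement yourself, the sketch below uses the standard normal-approximation formula for comparing two proportions. It assumes a two-sided test at 5% significance and 80% power by default; the 3% baseline CTR is a hypothetical input.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate observations needed per variant to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Impressions per variant to detect a 10% relative CTR lift from a 3% baseline.
print(sample_size_per_variant(0.03, 0.10))
```

With a low baseline rate and a modest lift, the result lands in the tens of thousands of impressions per variant, which is why low-traffic pages often need to be pooled into cohorts before testing.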

Track the right KPIs for each test: CTR and clicks for meta/headline experiments, time on page and scroll depth for content layout tests, and add_to_cart and purchase (conversion events) for product page experiments. Capture secondary signals—bounce rate, page speed, or error rates—to ensure there are no negative side effects. If a template variant shows better CTR but worse conversion, you either tweak the template or run a follow-up test focused on the consideration/checkout experience.

Finally, incorporate learnings into Trafficontent briefs. When a headline formula or schema markup consistently wins, bake that into your AI templates. When a template underperforms on high-AOV products, exclude those SKUs from auto-publish and route them to a manual workflow. The goal of testing is to create a decision library that scales your wins and avoids repeating mistakes.

Dashboards, reporting cadence and alert thresholds

Design a dashboard in Looker Studio that merges GA4, Search Console, and rank-tracker exports to give a single view of AI-driven keyword cohorts. The dashboard should spotlight trend lines for impressions, clicks, average position, landing-page conversion rate, and revenue per session by cohort. Use color sparingly: green for sustained improvements, amber for volatility, and red for actionable declines.

Set a reporting cadence that balances vigilance with focus: weekly monitoring for operational alerts (publishing errors, indexation regressions, major ranking drops), and monthly business reviews that synthesize impact—traffic lift, revenue attribution, and efficiency gains. Quarterly deep dives should feed strategy adjustments, template updates, and roadmap decisions for Trafficontent usage.

Configure concrete alert thresholds to reduce noise but catch real problems: notify on organic traffic drops >15% week-over-week for major cohorts, indexation loss of >5% of AI-generated pages, ranking declines of >10 positions for core target keywords, or a spike in rollback incidents over a week. For revenue-sensitive pages, create stricter alerts (e.g., revenue per visitor decline >10%). Ensure alerts include the cohort ID and page list so owners can triage quickly.
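
These thresholds translate directly into a rule check. The sketch below assumes a per-cohort snapshot with hypothetical field names; each alert message carries the cohort ID so owners can triage quickly.

```python
def fractional_drop(previous, current):
    """Decline from previous to current as a fraction (0 when there is no decline)."""
    if previous <= 0:
        return 0.0
    return max(0.0, (previous - current) / previous)


def check_alerts(snapshot):
    """Return alert messages for one keyword cohort snapshot."""
    cohort = snapshot["cohort_id"]
    alerts = []
    if fractional_drop(snapshot["sessions_prev_week"], snapshot["sessions_this_week"]) > 0.15:
        alerts.append(f"{cohort}: organic sessions down more than 15% week over week")
    if fractional_drop(snapshot["indexed_prev"], snapshot["indexed_now"]) > 0.05:
        alerts.append(f"{cohort}: more than 5% of AI-generated pages lost from the index")
    for keyword, (old_pos, new_pos) in snapshot["positions"].items():
        if new_pos - old_pos > 10:  # a larger position number means a worse rank
            alerts.append(f"{cohort}: '{keyword}' fell more than 10 positions")
    if fractional_drop(snapshot["rpv_prev"], snapshot["rpv_now"]) > 0.10:
        alerts.append(f"{cohort}: revenue per visitor down more than 10%")
    return alerts


# Hypothetical weekly snapshot for one cohort.
snapshot = {
    "cohort_id": "kw-home-fix",
    "sessions_prev_week": 1000,
    "sessions_this_week": 800,
    "indexed_prev": 200,
    "indexed_now": 195,
    "positions": {"cordless drill": (4, 16), "drill bits": (8, 9)},
    "rpv_prev": 1.50,
    "rpv_now": 1.40,
}
for message in check_alerts(snapshot):
    print(message)
```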

Keep stakeholder reports simple: for execs, show ROI—hours saved, revenue lift, and cost to produce content. For content teams, surface top keywords, low-CTR pages that need titles, and pages flagged for technical fixes. For developers, present Core Web Vitals regressions and indexation issues. Different lenses keep the team aligned and focused on remedial actions, not data overload.

Operational playbook: turn insights into optimizations

When your dashboard surfaces an issue, follow a concise, repeatable playbook. First, prioritize by revenue uplift: sort pages by expected incremental revenue if improved (current traffic × conversion uplift × AOV). Tackle the highest-impact pages first. For low CTR on product pages: A/B test title tag variants, add benefit statements to meta descriptions, and implement FAQ schema to enhance snippets.
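
The prioritization formula (current traffic × conversion uplift × AOV) can be sketched as a sort. Here "conversion uplift" is assumed to mean the expected absolute gain in conversion rate, and every page and figure is hypothetical.

```python
def expected_incremental_revenue(sessions, conversion_uplift, aov):
    """Current traffic x expected gain in conversion rate x average order value."""
    return sessions * conversion_uplift * aov


def prioritize(pages):
    """Sort pages by expected incremental revenue, highest first."""
    return sorted(
        pages,
        key=lambda p: expected_incremental_revenue(p["sessions"], p["uplift"], p["aov"]),
        reverse=True,
    )


# Hypothetical candidates: monthly sessions, expected uplift, and AOV.
pages = [
    {"url": "/products/a", "sessions": 5000, "uplift": 0.004, "aov": 80.0},
    {"url": "/products/b", "sessions": 12000, "uplift": 0.001, "aov": 45.0},
    {"url": "/products/c", "sessions": 800, "uplift": 0.010, "aov": 300.0},
]
for page in prioritize(pages):
    print(page["url"])
```

In this made-up example the lowest-traffic page ranks first because of its high AOV, which is the kind of result a traffic-only sort would miss.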

For new keyword opportunities surfaced by Trafficontent, create a rollout plan: draft a keyword brief, generate content via the AI, schedule publishing in batches, and link to related category pages to pass internal authority. Monitor impressions and CTR in the first 30 days—if impressions rise but CTR stalls, iterate on meta and schema. If rankings miss expectations at 90 days, expand internal linking and add supporting content to signal relevance.

When templates underperform, run a controlled rollback: pause auto-publish for the affected template, analyze engagement metrics and technical logs, and run a small rework on sample pages. Re-deploy only after a successful A/B test or an uplift in secondary metrics such as dwell time and add_to_cart. Always schedule a 30/90/180 follow-up measurement window to capture short- and medium-term impact.

Operational rigor—clear prioritization, narrowly scoped tests, and scheduled follow-ups—lets you scale AI-driven keyword work without amplifying mistakes. Use Trafficontent’s cohort IDs and publishing metadata to maintain visibility into what was deployed, when, and by whom. That lineage makes troubleshooting fast and keeps automation accountable.

Next step: pick one high-impact keyword cluster, set a SMART goal for 90 days, wire Trafficontent into your CMS and GA4, and build a Looker Studio view that tracks impressions → CTR → conversions. Run one A/B meta test and log the time saved per page. Those two experiments will give you an early read on both performance and efficiency within a month, well before the 90-day goal comes due.