If you're running a WordPress store or content site in 2025, technical SEO is no longer a set-and-forget checklist. Speed, Core Web Vitals, and crawl budget interact: a slow server hurts LCP, images and embeds without reserved dimensions cause CLS problems, and thousands of unnecessary indexable pages waste crawl budget and bury your important product pages. This article gives a practical, repeatable blueprint that integrates those three priorities into a workflow you can run weekly or automate with tools like Trafficontent.

Expect clear targets, concrete configuration advice, plugin recommendations, and an automation pattern that turns monitoring into action. I’ll show how to measure a baseline, fix the common bottlenecks on WordPress, and then automate the checks and task creation so your team spends time implementing fixes — not chasing alerts.

Speed foundations for WordPress sites

Speed is the baseline for both user experience and the Core Web Vitals that Google looks at. Start by creating a measurable baseline: run lab tests with Lighthouse or PageSpeed Insights and grab field data from Google Search Console's Core Web Vitals report. Capture LCP, CLS, and TBT/INP for your top 20 pages (home, major categories, and highest-converting product pages). Those numbers are your north star when you prioritize fixes.
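As a rough sketch of capturing that baseline, the function below pulls lab and field metrics out of one PageSpeed Insights API (v5) response that has already been fetched and parsed into a dict. The field names reflect the v5 response format as I understand it; check them against a real response before relying on them. `extract_baseline` is an illustrative name, not part of any library.

```python
from typing import Any, Optional


def extract_baseline(psi: dict[str, Any]) -> dict[str, Optional[float]]:
    """Collect lab (Lighthouse) and field (CrUX) metrics from one PSI v5 response."""
    audits = psi.get("lighthouseResult", {}).get("audits", {})
    field = psi.get("loadingExperience", {}).get("metrics", {})

    def lab(audit_id: str) -> Optional[float]:
        return audits.get(audit_id, {}).get("numericValue")

    def p75(metric: str) -> Optional[float]:
        return field.get(metric, {}).get("percentile")

    cls_field = p75("CUMULATIVE_LAYOUT_SHIFT_SCORE")
    return {
        "lab_lcp_ms": lab("largest-contentful-paint"),
        "lab_cls": lab("cumulative-layout-shift"),
        "lab_tbt_ms": lab("total-blocking-time"),
        "field_lcp_ms": p75("LARGEST_CONTENTFUL_PAINT_MS"),
        # Assumption: PSI reports field CLS multiplied by 100 (12 means 0.12).
        "field_cls": None if cls_field is None else cls_field / 100,
        "field_inp_ms": p75("INTERACTION_TO_NEXT_PAINT"),
    }
```

Missing metrics come back as `None`, which is common for low-traffic pages that have no field data yet.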

Next, reduce server work and render-blocking time. Practical steps: upgrade to a supported PHP 8.x release, enable OPcache, and use a lean theme that avoids heavy page builders. Implement multi-layer caching: page caching (WP Rocket or an equivalent), object caching (Redis), and a CDN edge layer (Cloudflare or a comparable CDN). These layers cut TTFB by serving prebuilt responses instead of running PHP and database queries on every request; they do not reduce the JavaScript the browser has to execute, which needs separate treatment. Aim for a TTFB of roughly 200–300 ms or less under normal load; if your host consistently delivers 500+ ms, move hosts or scale up.

Minimize render-blocking CSS/JS: let your caching plugin handle minification, but treat bundling as an experiment — combining many scripts can sometimes hurt HTTP/2 or HTTP/3 parallelism. Inline critical CSS for the above-the-fold content and defer noncritical CSS/JS. For fonts, use font-display: swap and preload the most important font variants to avoid text invisibility. For busy stores, lazy-load below-the-fold images and iframes; leave critical hero media un-lazy-loaded so LCP is not delayed.

Finally, measure perceived speed, not just technical scores. WebPageTest's filmstrip view, which shows the moment the LCP element actually appears, and a real-user metrics dashboard (combining Lighthouse lab scores with field data from GSC and GA4) help you prioritize changes that users actually feel. With a clear baseline and these tactics, you'll close the biggest gaps quickly.

Server, hosting, and infrastructure choices for WordPress speed

Your hosting and stack choices shape everything else. For most stores and mid-size content sites, managed WordPress hosts (Kinsta, WP Engine, SiteGround) remove a lot of configuration burden and often ship with edge caching, HTTP/3, and tuned PHP stacks. If you prefer VPS control (DigitalOcean, Linode, AWS Lightsail), provision enough CPU headroom and enable NGINX, PHP 8.x, and OPcache. Always verify that HTTP/2 or HTTP/3 is supported and that TLS is modern (TLS 1.2+ with current ciphers).

Measure TTFB across typical traffic patterns. A good rule of thumb: 300 ms or less under load, ideally closer to 200 ms, with room to breathe during traffic spikes. If your host auto-scales or provides predictable performance tiers, plan for seasonal peaks (product launches or holiday promotions). For ecommerce, edge caching at the CDN level matters: static assets and cacheable pages should be served from the edge, while dynamic checkout flows are handled by origin with short cache lifetimes.
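One way to turn "measure TTFB across typical traffic patterns" into a number is to sample it repeatedly and compare the median and 95th percentile against the budget. The sketch below is a minimal version using only the standard library; `measure_ttfb_ms` is an approximation (it times the request up to the first body byte, including DNS and TLS setup), and the 300 ms default budget comes from the rule of thumb above.

```python
import math
import statistics
import time
import urllib.request


def measure_ttfb_ms(url: str, timeout: float = 10.0) -> float:
    """Approximate TTFB: elapsed time until the first body byte is read."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read(1)
    return (time.perf_counter() - start) * 1000


def summarize_ttfb(samples_ms: list[float], budget_ms: float = 300.0) -> dict:
    """Summarize samples and judge them by the 95th percentile, not the average."""
    ordered = sorted(samples_ms)
    # Nearest-rank percentile: the smallest sample covering 95% of observations.
    rank = max(1, math.ceil(0.95 * len(ordered)))
    p95 = ordered[rank - 1]
    return {
        "median_ms": statistics.median(ordered),
        "p95_ms": p95,
        "within_budget": p95 <= budget_ms,
    }
```

Judging by the 95th percentile rather than the median is a deliberate choice here: a host that is fast on average but stalls during spikes will still fail the check.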

Consider edge logic or a CDN that supports edge rules — e.g., Cloudflare Workers or similar — to offload heavy work. Use a CDN that can automatically deliver WebP/AVIF variants and do image optimization at the edge to reduce origin CPU. If you’re on a constrained budget, at minimum use a CDN for static assets and a well-configured page cache plugin, while planning for a managed host when revenue scales.

Core Web Vitals in WordPress: targets and measurement

Core Web Vitals have precise thresholds: LCP ≤ 2.5 s, INP ≤ 200 ms, and CLS ≤ 0.1, each assessed at the 75th percentile of real page loads. (INP replaced FID, whose threshold was 100 ms, as the responsiveness metric in March 2024.) Treat those thresholds as pass/fail gates for your priority pages. Field data (Search Console and Chrome UX Report) tells you what real users experience. Lab data (Lighthouse or PageSpeed Insights) is how you reproduce and diagnose issues locally. Use both: field data to prioritize, lab data to debug.
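The pass/fail gate can be sketched as a small rating function. It uses the "good" and "poor" boundaries Google publishes for the current metrics (LCP 2.5 s / 4 s, INP 200 ms / 500 ms, CLS 0.1 / 0.25); the function and dictionary names are illustrative.

```python
# (good_upper_bound, poor_lower_bound) for each metric, applied to p75 field values.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate(metric: str, value: float) -> str:
    """Classify one p75 value as good, needs-improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs-improvement"
    return "poor"


def page_passes(p75_values: dict[str, float]) -> bool:
    """A page passes only when every supplied metric rates as good."""
    return all(rate(metric, value) == "good" for metric, value in p75_values.items())
```

For example, `page_passes({"lcp_ms": 2300, "inp_ms": 240, "cls": 0.04})` is false because INP alone falls into the needs-improvement band.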

Start with LCP: the largest above-the-fold element (often a hero image or product image) must render quickly. Fixes that move the needle include reducing server response time, serving compressed/modern image formats, preloading the hero image, and trimming critical CSS. Example: swapping a 1.2MB hero JPEG for a 200–300KB AVIF or WebP often shaves a full second or more from LCP on mobile.
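A back-of-the-envelope calculation shows where that "full second" comes from. The sketch assumes a hypothetical 8 Mbps effective mobile connection and ignores latency, connection setup, and decode time, so treat the result as an order-of-magnitude estimate rather than a prediction.

```python
def transfer_seconds(size_kb: float, bandwidth_mbps: float) -> float:
    """Time to move size_kb kilobytes over a link of bandwidth_mbps megabits/s."""
    kilobits = size_kb * 8
    return kilobits / (bandwidth_mbps * 1000)


ASSUMED_MBPS = 8.0  # hypothetical effective mobile throughput

jpeg_seconds = transfer_seconds(1200, ASSUMED_MBPS)  # 1.2 MB hero JPEG
avif_seconds = transfer_seconds(250, ASSUMED_MBPS)   # ~250 KB AVIF/WebP replacement
saved_seconds = jpeg_seconds - avif_seconds          # about 0.95 s at this bandwidth
```

On slower connections the saving grows proportionally, which is why the same swap tends to matter most in mobile field data.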

CLS (visual stability) is about layout shifts. Reserve dimensions on img and iframe elements with width/height attributes or CSS aspect-ratio. Preload key fonts and pick fallback fonts with similar metrics, because font-display: swap prevents invisible text but the swap itself can reflow the page. Avoid dynamically injecting content above existing content (ads, popups, or late-loading banners). For long-tail stores, attachment pages and plugin-inserted content are common culprits, so audit generated markup that alters layout on load.
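Auditing generated markup for unsized media can be partly automated. The sketch below scans an HTML string for img and iframe tags that have neither width/height attributes nor an inline aspect-ratio style. It cannot see dimensions set in external stylesheets, so its findings are candidates to review, not confirmed CLS sources.

```python
from html.parser import HTMLParser


class UnsizedMediaFinder(HTMLParser):
    """Collects img/iframe tags that do not reserve layout space in the markup."""

    def __init__(self) -> None:
        super().__init__()
        self.unsized: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("img", "iframe"):
            return
        attributes = dict(attrs)
        has_dimensions = bool(attributes.get("width") and attributes.get("height"))
        has_inline_ratio = "aspect-ratio" in (attributes.get("style") or "")
        if not (has_dimensions or has_inline_ratio):
            self.unsized.append((tag, attributes.get("src") or ""))


def find_unsized_media(html: str) -> list[tuple[str, str]]:
    """Return (tag, src) pairs for media that may shift layout when it loads."""
    finder = UnsizedMediaFinder()
    finder.feed(html)
    return finder.unsized


SAMPLE_HTML = (
    '<img src="hero.webp" width="1200" height="600">'
    '<img src="badge.png">'
    '<iframe src="https://example.com/video" style="aspect-ratio: 16 / 9"></iframe>'
)
```

Running `find_unsized_media(SAMPLE_HTML)` reports only `badge.png`, the one element with no reserved space.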

Interactivity (INP, formerly FID) depends on long tasks caused by heavy JavaScript. Use code-splitting, defer or async noncritical scripts, and offload heavy third-party scripts. For ecommerce checkouts, single-page-app behaviors can increase long tasks; measure with the Performance panel in Chrome DevTools and move heavy computation into web workers where applicable. Track INP rather than FID, which is no longer a Core Web Vital: target an INP of 200 ms or less, and break up long tasks (anything that occupies the main thread for more than 50 ms) to improve responsiveness.

Image and media optimization for speed and CWV

Images are often your largest page assets and a direct lever for LCP and CLS. Use modern formats — WebP is widely supported and AVIF is gaining support for even smaller files — and set up an automated conversion pipeline so authors don’t need to think about formats. Many plugins (Imagify, ShortPixel, Smush) can convert on upload and generate fallback formats when needed.

Responsive images with srcset and sizes let the browser select the best image for the viewport. Make sure your theme outputs the various sizes WordPress generates (thumbnail, medium, medium_large, large, and the larger 1536 px and 2048 px variants that serve high-density screens). Avoid uploading one massive file and letting CSS scale it down; that wastes bandwidth. Also explicitly set width and height attributes or use CSS aspect-ratio to reserve space and prevent layout shifts. It is a simple step that often fixes CLS issues for hero images and product galleries.

Lazy loading is essential for reducing initial page weight, but apply it selectively: native loading="lazy" is excellent for below-the-fold images and iframes, but do not lazy-load the hero image that defines LCP. Use a CDN that supports automatic image transformation and caching at the edge to serve optimized images closest to users. For product-heavy pages, consider “progressive loading” — load visible thumbnails at higher quality and lower-priority images at smaller sizes.
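To make the responsive-image and lazy-loading advice concrete, here is a small sketch that assembles an img tag with srcset, sizes, reserved dimensions, and optional lazy loading. WordPress core normally produces this markup for you; the helper `build_img_tag` and the file names are hypothetical and only show what well-formed output looks like.

```python
from html import escape


def build_img_tag(sources: dict[int, str], sizes: str, width: int, height: int,
                  alt: str, lazy: bool = True) -> str:
    """Build an <img> tag from a {pixel_width: url} map.

    Pass lazy=False for the hero image so the LCP element is not deferred.
    """
    ordered = sorted(sources.items())
    srcset = ", ".join(f"{url} {pixel_width}w" for pixel_width, url in ordered)
    fallback_url = ordered[-1][1]  # largest variant for browsers without srcset
    loading = ' loading="lazy"' if lazy else ""
    return (
        f'<img src="{escape(fallback_url)}" srcset="{escape(srcset)}" '
        f'sizes="{escape(sizes)}" width="{width}" height="{height}" '
        f'alt="{escape(alt)}"{loading}>'
    )


hero = build_img_tag(
    {960: "hero-960.webp", 480: "hero-480.webp"},
    sizes="100vw", width=960, height=480, alt="Spring collection", lazy=False,
)
```

The width and height attributes describe the intrinsic aspect ratio, which is what lets the browser reserve space before any variant has downloaded.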

Practical compression settings: for photographic images, try quality ranges of 70–85% for JPEG or the equivalent in WebP/AVIF, then visually test; for icons, use SVG. Automate this in your workflow: configure your image plugin to compress on upload, trigger a bulk optimization job for legacy assets, and ensure backups are kept before mass conversions. A CDN that offers format negotiation reduces complexity on the server while improving real-world load times.

Crawl budget strategy for WordPress

Crawl budget matters most on large sites: if crawlers keep hitting low-value pages (tag archives, admin endpoints, or thousands of near-duplicate product variations), they spend less time discovering your fresh, revenue-driving pages. Start with a crawl audit (Screaming Frog, Sitebulb) and a log-file analysis to see what bots are actually fetching. Identify over-crawled patterns and low-value endpoints.
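A first pass at the log-file analysis can be as simple as counting Googlebot requests per site section. The sketch assumes access logs in the common "combined" format and matches on the user-agent string only; user agents can be spoofed, so a production version should also verify the requesting IP. The bucketing rules are illustrative.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a combined log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def bucket(path: str) -> str:
    """Group a request path by its first segment; lump query-string URLs together."""
    if "?" in path:
        return "(parameterized)"
    first_segment = path.strip("/").split("/", 1)[0]
    return f"/{first_segment}/" if first_segment else "/"


def googlebot_hits(lines) -> Counter:
    """Count requests per bucket for lines whose user agent mentions Googlebot."""
    counts: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            counts[bucket(match.group("path"))] += 1
    return counts
```

Calling `googlebot_hits(open("access.log"))` and reading `most_common()` quickly shows whether tag archives or parameterized URLs are absorbing more fetches than product pages.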

Prune and control indexation. Use robots.txt to disallow administrative endpoints (/wp-admin/, /wp-login.php) and low-value query-parameter paths, but be careful not to block CSS/JS that the crawler needs to render pages. Use XML sitemaps (generated by Yoast or Rank Math) that list only canonical, high-value URLs and exclude tag, author, and empty archive pages. Where relevant, add noindex to paginated archives or thin attachment pages that provide no unique value.
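Before deploying robots.txt changes, it helps to check that the rules block what you intend and nothing a crawler needs for rendering. The sketch below uses Python's standard `urllib.robotparser`. Two caveats: that parser applies the first matching rule and does not understand wildcards, whereas Google uses the most specific match, so the Allow line is placed first to get the same answer from both. The rules and URLs are examples.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/admin-ajax.php
Disallow: /wp-admin/
Disallow: /wp-login.php
"""

# Assets and endpoints a crawler needs in order to render pages correctly.
MUST_STAY_CRAWLABLE = [
    "https://example.com/wp-content/themes/shop/style.css",
    "https://example.com/wp-includes/js/jquery/jquery.min.js",
    "https://example.com/wp-admin/admin-ajax.php",
]


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the subset of urls that the given rules would block for agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]
```

An empty result from `blocked_urls(ROBOTS_TXT, MUST_STAY_CRAWLABLE)` is the condition to assert in a pre-deploy check.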

Streamline redirects and remove duplicate content. A proliferation of 301 chains wastes bot time and dilutes link equity. Consolidate variations with canonical tags and stable URLs. For ecommerce filters that create many parameterized URLs, point rel="canonical" at the unfiltered page and, when necessary, either mark the filtered URLs noindex or block them in robots.txt after evaluating user-facing needs. Choose one or the other: a crawler cannot read a noindex tag on a URL that robots.txt stops it from fetching. For large product catalogs, a consistent URL scheme and strong internal linking are critical to guide crawlers toward priority pages.
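Redirect chains are easy to find once the redirect rules are exported as a source-to-target map (for example from a crawl report). This sketch follows each source and reports any that need more than one hop, including loops; the function names are illustrative.

```python
def resolve_chain(start: str, redirects: dict[str, str], max_hops: int = 10) -> list[str]:
    """Follow redirects from start and return every URL visited, in order."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        next_url = redirects[chain[-1]]
        chain.append(next_url)
        if next_url in chain[:-1]:
            break  # loop detected; stop after recording the repeated URL
    return chain


def find_chains(redirects: dict[str, str]) -> dict[str, list[str]]:
    """Return sources whose redirect takes two or more hops to settle."""
    chains = {}
    for source in redirects:
        chain = resolve_chain(source, redirects)
        if len(chain) > 2:
            chains[source] = chain
    return chains
```

Each reported chain can be flattened by pointing its first URL directly at the last one.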

Improve internal linking and architecture: keep your important pages within three clicks of the homepage, create topic hub pages that link to related content, and use descriptive anchors. This shallow architecture both helps users and reduces crawl depth. Finally, review your sitemap and robots.txt after major content updates and resubmit the sitemap in Search Console to prompt efficient recrawling of changed content.
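The three-click guideline can be checked with a breadth-first search over the internal link graph, which most crawlers can export. The sketch assumes the graph is a dict mapping each page to the pages it links to; pages that are never reached are treated as infinitely deep.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], important: list[str],
             max_depth: int = 3, home: str = "/") -> list[str]:
    """Important pages that are unreachable or more than max_depth clicks away."""
    depths = click_depths(links, home)
    return sorted(p for p in important if depths.get(p, float("inf")) > max_depth)
```

Orphaned pages show up in the same report as deep ones, which is usually what you want: both need a new internal link.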

WordPress plugins and automation for technical SEO

Choose plugins that produce clean, performant output and automate the repetitive work. For caching and minification, WP Rocket is a common choice; if you need free alternatives, combine a CDN with W3 Total Cache cautiously. For image optimization, pick one automated service (Imagify, ShortPixel, or Smush) and configure it to convert to WebP/AVIF on upload. For SEO, Yoast and Rank Math both generate sitemaps, structured data, and robots.txt rules — configure them to exclude thin or duplicate pages.

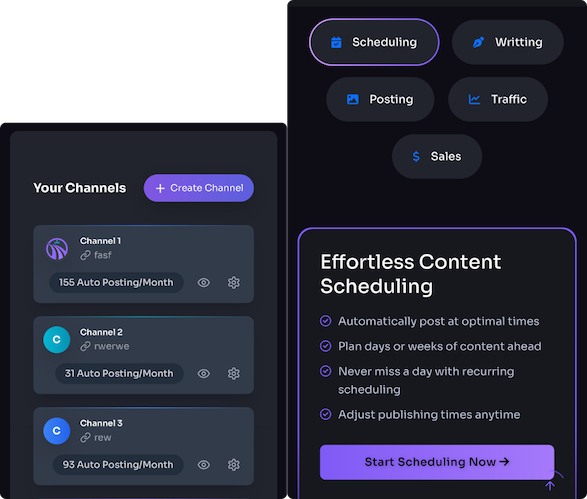

Automation is where Trafficontent fits neatly into a WordPress technical SEO routine. Use Trafficontent to schedule automated audits, generate tasks, and manage the publishing pipeline so technical checks happen before new content goes live. Example workflow: when an author schedules a product or blog post, Trafficontent triggers an image-optimization check, ensures alt text is present, runs a Lighthouse snapshot on the staging URL, and only publishes when the page’s LCP and CLS metrics meet your policy. That prevents regressions from entering production and saves developers from reactive firefighting.
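The publish gate in that workflow boils down to a policy check. The sketch below is generic and does not use Trafficontent's actual API, which this article does not document; `Snapshot` and `publish_blockers` are hypothetical names, and the default limits are the LCP and CLS thresholds used throughout this article.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Results of a pre-publish check on a staging URL (hypothetical structure)."""
    lcp_ms: float
    cls: float
    images_missing_alt: int


def publish_blockers(snapshot: Snapshot, max_lcp_ms: float = 2500,
                     max_cls: float = 0.1) -> list[str]:
    """Return human-readable reasons to hold the post; an empty list means publish."""
    blockers = []
    if snapshot.lcp_ms > max_lcp_ms:
        blockers.append(f"LCP {snapshot.lcp_ms:.0f} ms exceeds {max_lcp_ms:.0f} ms")
    if snapshot.cls > max_cls:
        blockers.append(f"CLS {snapshot.cls:.2f} exceeds {max_cls:.2f}")
    if snapshot.images_missing_alt > 0:
        blockers.append(f"{snapshot.images_missing_alt} image(s) missing alt text")
    return blockers
```

Returning reasons instead of a bare boolean means the same output can be pasted into the ticket or notification that explains why a post was held.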

Other practical automations: set Trafficontent to refresh sitemaps with accurate lastmod dates when content is published (Google retired its sitemap ping endpoint in 2023, so rely on lastmod and Search Console submission instead); create a weekly job that pulls Core Web Vitals data from Search Console, aggregates anomalies, and opens tickets in your issue tracker for pages that fail thresholds; and auto-notify the team via Slack when regressions are detected. Pair these automations with staging tests and a one-click rollback plan (host snapshots or UpdraftPlus) so changes can be reverted safely.
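SEO plugins generate sitemaps for you, so the following is only a sketch of what a refreshed sitemap should contain: indexable URLs only, each with a last-modified date. The input tuples and the `build_sitemap` helper are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> str:
    """Build sitemap XML from (url, lastmod_iso_date, indexable) tuples.

    Non-indexable pages (noindex, thin archives) are left out entirely.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for location, lastmod, indexable in pages:
        if not indexable:
            continue
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = location
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


sitemap_xml = build_sitemap([
    ("https://example.com/product/blue-shirt/", "2025-03-10", True),
    ("https://example.com/tag/sale/", "2025-03-01", False),
])
```

Keeping excluded pages out of the sitemap matters as much as including the right ones, since a sitemap entry is a request to crawl.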

Configure plugin settings for CWV-friendly output: disable heavy features you don’t use, use lazy-loading selectively, and avoid loading plugin assets globally — only enqueue where needed. Periodically audit plugin impact with the Query Monitor plugin or by comparing Lighthouse runs with and without a suspicious plugin enabled.

Measuring impact and ongoing optimization

Measure progress with a concise set of KPIs and dashboards. Track LCP, CLS, and INP for your priority pages, plus TTFB, index coverage, crawl rate, organic impressions, and conversions. Create a Looker Studio dashboard that combines Search Console, GA4, and Lighthouse lab scores so stakeholders see both technical and business impact. Set alert thresholds (for example, LCP > 3s or a sudden dip in impressions) and route alerts to the right owner.

Establish a cadence: a fast weekly loop for quick checks (top 20 pages), a monthly technical crawl (Screaming Frog/Sitebulb) to find 404s, duplicate titles, and redirect chains, and a quarterly deep audit that includes log-file analysis. Document each change and test it with A/B or controlled experiments when possible — change one variable at a time, and measure before/after on a representative set of pages to avoid false conclusions.

Automate the mundane. Use Trafficontent to schedule weekly Lighthouse runs on your highest-traffic pages, aggregate the results, and open tickets for issues that exceed thresholds. Example: when Lighthouse detects a new long task over 200 ms, Trafficontent creates a developer ticket with the trace link and assigns priority. This cuts the time from detection to fix dramatically and keeps audit debt from accumulating.

Keep a living KPI sheet: record baseline values, changes made, dates, and the regression status. After each fix, re-run field-checks and lab audits and capture both Lighthouse snapshots and real-user metrics to confirm the improvement. Over time, this data-driven loop creates a library of fixes that reliably move the needle across different site templates and content types.
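The regression-status column of that sheet can be computed rather than typed. This sketch compares current values with the recorded baseline and assumes every tracked metric is lower-is-better (true for LCP, CLS, INP, and TTFB); the 10% tolerance is an arbitrary starting point meant to absorb run-to-run noise.

```python
def regression_status(baseline: dict[str, float], current: dict[str, float],
                      tolerance: float = 0.10) -> dict[str, str]:
    """Label each metric as improved, unchanged, or regressed versus the baseline."""
    status = {}
    for metric, before in baseline.items():
        after = current[metric]
        if after > before * (1 + tolerance):
            status[metric] = "regressed"
        elif after < before * (1 - tolerance):
            status[metric] = "improved"
        else:
            status[metric] = "unchanged"
    return status
```

For instance, a baseline of `{"lcp_ms": 3000, "inp_ms": 180}` against a current `{"lcp_ms": 2400, "inp_ms": 260}` yields an improved LCP and a regressed INP, which is exactly the kind of trade-off a single score would hide.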

Advanced crawl budget and content strategy for WordPress

When a site grows into the thousands or tens of thousands of pages, log-file analysis becomes essential. Logs reveal what bots are fetching, how often, and which endpoints generate errors or heavy server load. Use those insights to apply surgical robots.txt rules or noindex tags. For example, if bots repeatedly request image-attachment pages or paginated tag archives that offer no unique content, mark them noindex and remove them from the sitemap.

Consolidate signals with canonicalization and improved internal linking. If similar product variants create multiple near-identical URLs, canonicalize to the primary SKU. Google retired the URL Parameters tool in Search Console in 2022, so canonical tags, consistent internal links, and robots.txt rules are now how you indicate the way filters should be treated. Build hub pages that gather link equity and act as authoritative gateways to clusters of related content or product lines. This both reduces crawl depth and improves topical relevance.

Balance crawl efficiency with discoverability. Never block URLs you want indexed. For low-value URLs, remove them from the sitemap and apply noindex, leaving them crawlable so the directive can actually be read. For large catalogs, consider crawling rules that allow bots to hit product detail pages frequently but limit crawling of filter/sort combinations. Use consistent URL structures and avoid query-parameter proliferation. Where filters are essential for users, use canonical tags, structured data, or indexable landing pages for major filter combinations rather than allowing every permutation to be crawled.
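Deriving the canonical URL for a filtered listing is mostly a matter of dropping the filter and sort parameters. The sketch below does that with the standard library; the parameter names in `FILTER_PARAMS` are examples in the style of common store plugins and should be replaced with the ones your site actually emits.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Example filter/sort parameters to strip; adjust to match your own URLs.
FILTER_PARAMS = frozenset({"orderby", "min_price", "max_price", "filter_color", "filter_size"})


def canonical_url(url: str, drop: frozenset = FILTER_PARAMS) -> str:
    """Remove filter/sort parameters and any fragment, keeping everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in drop
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
```

Every filter permutation of a category then maps to one canonical target, which is the value to emit in the page's rel="canonical" link.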

Finally, combine these technical actions with an editorial plan that supports crawl-efficiency: schedule content updates to pillars and hubs, tie internal linking tasks into your content calendar, and use Trafficontent to generate and schedule those internal linking updates automatically after major product launches. This keeps the site both discoverable and focused, freeing crawl budget for the pages that drive traffic and revenue.

Next step: pick one critical page set (home, top 5 product pages, top blog posts), run a Lighthouse and log-file check this week, and set up Trafficontent to automate the weekly audit and ticket creation. That small, repeatable loop — measure, fix, automate — is how technical SEO becomes scalable and sustainable.